Huazhong University of Science and Technology

Huazhong University of Science and Technology DianGroup Robotics Lab

Hi! This is the DianGroup Robotics Lab at Huazhong University of Science and Technology.

Our research focuses on robotics and artificial intelligence, including reinforcement learning, robot manipulation, and digital twin systems.

News

Selected Publications

ChemAI: Empowering Robots to Automate Chemical Experiments with Large Language Models

Yefan Lin*, Ziyuan Wang*, Lujia Zhang, Chengwei Zhang, Xiaojun Hei# (* equal contribution, # corresponding author)

International Conference on Computing and Artificial Intelligence (ICCAI) 2025

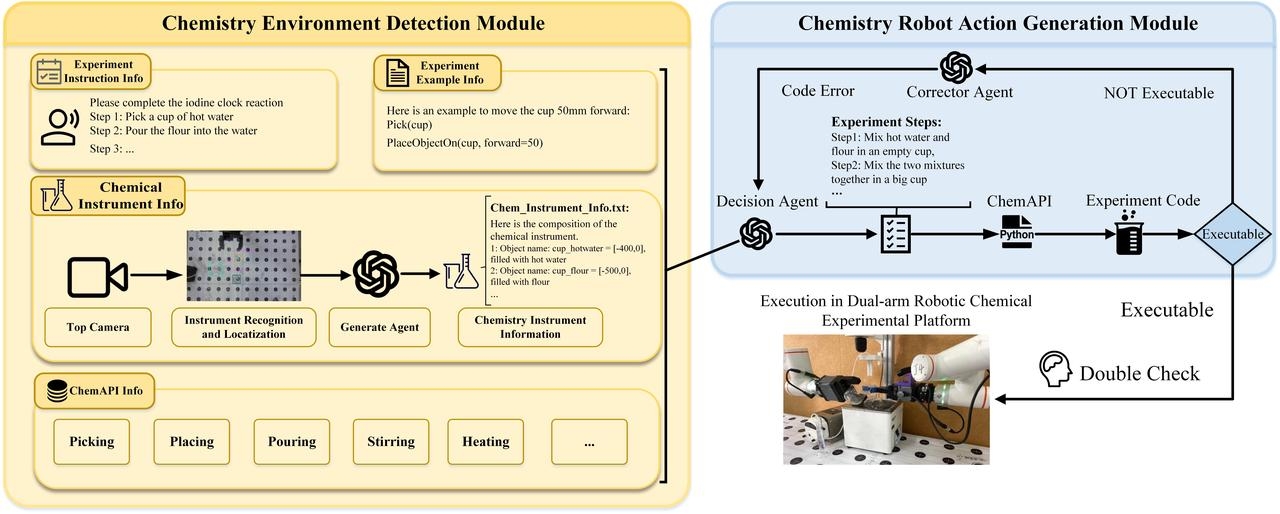

In this paper, we design ChemAI, an autonomous robotic system for chemistry experiment automation. It takes natural-language instructions from chemists as input, perceives the environment, and executes long-horizon tasks, including multi-step chemistry experiments.

Deep Reinforcement Learning for Sim-to-Real Transfer in a Humanoid Robot Barista

Ziyuan Wang, Yefan Lin, Leyu Zhao, Jiahang Zhang, Xiaojun Hei# (# corresponding author)

IEEE International Conference on Robotics and Biomimetics (ROBIO) 2024

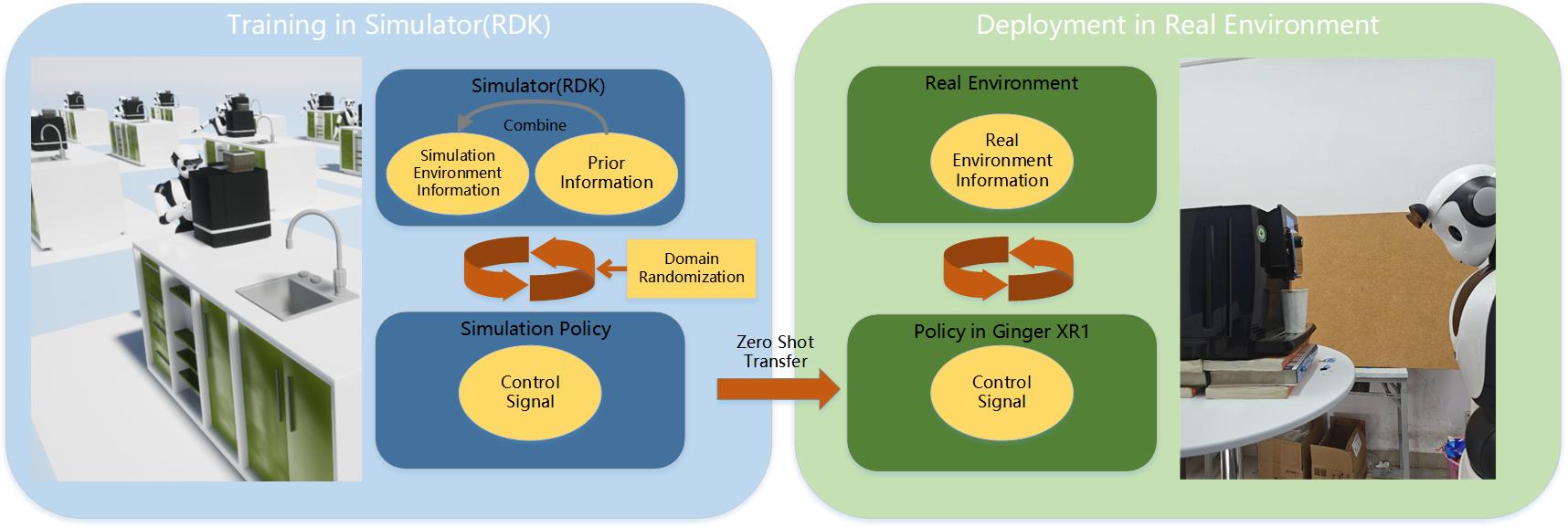

In this paper, we use coffee making as an example application. We propose a reinforcement learning method for robot manipulation with visual perception to bridge the sim-to-real gap, and we construct a high-fidelity digital twin simulation environment for coffee making.

Self-Perceptive Framework: A Manipulation Framework with Visual Compensation for Zero Position Error

Ziyuan Wang, Yefan Lin, Jiahang Zhang, Changjiang Han, Xiaojun Hei# (# corresponding author)

Autonomous Robot (under review)

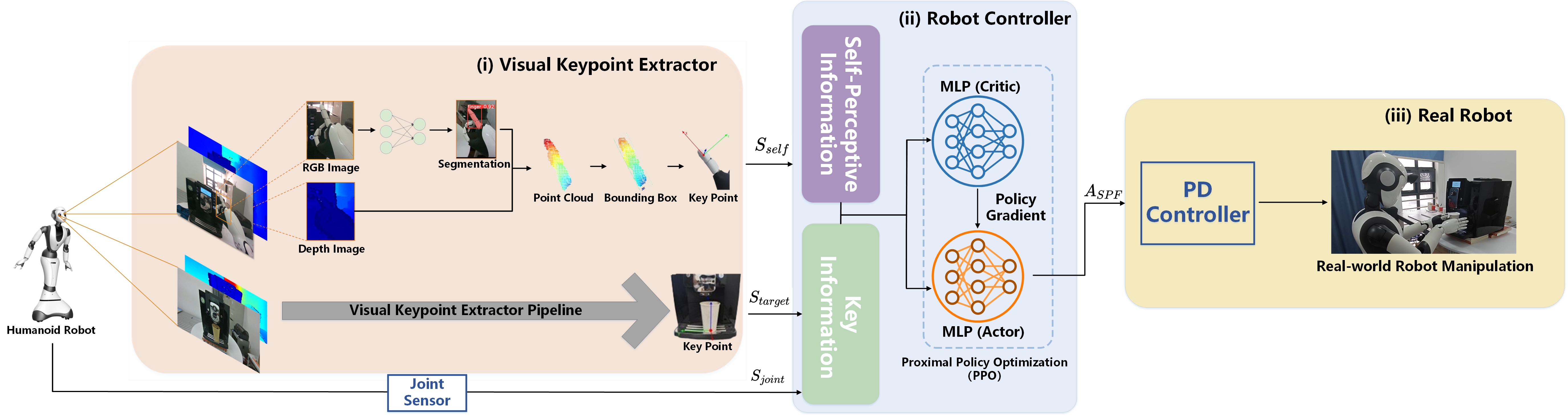

In this paper, we propose the Self-Perceptive Framework (SPF), a robot manipulation framework that uses reinforcement learning and incorporates self-perceptive information. It aims to improve the success rate of manipulation tasks affected by zero-position error and to reduce the frequency of recalibration.
